Curiosity output: 5

4.2.2026

Trustworthy AI: Automated vs Augmented Workflows

Input: Explore and analyze a selected dimension of AI to try to do something that is designed to investigate AI capabilities, trustworthiness, ethical implications, or creative potential in distinct contexts

Introduction

Artificial intelligence is rapidly reshaping both education and the professional labor market, shifting how knowledge is produced, applied, and evaluated. As generative AI systems become integrated into academic work and professional tasks, the focus is moving from what these models can generate to how reliably and effectively they perform in real-world contexts. Recent perspectives from the Stanford Institute for Human-Centered Artificial Intelligence highlight this transition, emphasizing increasing expectations around trust, accountability, and measurable impact as AI systems mature.

Within this evolving landscape, understanding how AI functions not only as a tool, but as a collaborator, becomes critical. This project builds on that shift by examining how ChatGPT can support both research and creative workflows, while critically evaluating its performance across different levels of human involvement. The following methodology outlines a structured approach to comparing automated and human-augmented uses of AI in producing academic and presentation-based deliverables.

Practicing Methodology

This project explores the use of the April 2026 ChatGPT Pro model as both a scientific research assistant and a collaborative creative partner in the development of two deliverables: (1) a condensed research case study and (2) a presentation derived from that case study. The Pro-tier model was selected due to its positioning as a tool optimized for advanced reasoning, analytical tasks, and professional-level content generation, making it suitable for both research and creative workflows.

The study follows a sequential workflow, in which the research case study is completed first, followed by the development of the presentation. This structure reflects a realistic academic and professional process, where written analysis informs downstream communication materials.

A central component of this methodology is a comparative analysis between automated and augmented AI use, designed to evaluate how varying levels of human involvement impact output quality, trustworthiness, and efficiency.

In the automated condition, I provide ChatGPT with pre-collected research materials and a single structured prompt instructing the model to generate a complete case study within the defined word limit. No follow-up prompts, corrections, or iterative guidance are provided. The resulting output is then used as the sole input for generating a corresponding presentation using AI-based content and image generation tools.

In the augmented condition, I iteratively collaborate with ChatGPT throughout the research and writing process. This includes refining prompts, evaluating outputs, correcting errors, and guiding the development of both the written case study and presentation materials. This approach reflects a human-in-the-loop model of AI use, where outputs are shaped through ongoing interaction and oversight.

Across both conditions, all interactions are systematically documented and evaluated using predefined metrics. For the research case study, evaluation focuses on trustworthiness and reliability, including the number of flawed citations, output errors, instances of model misunderstanding, and system-level failures (e.g., freezes). For the presentation deliverable, evaluation focuses on creative effectiveness and usability, including iteration counts, successful outputs, manual intervention requirements, and rejected prompts due to complexity. Time tracking is also recorded for both conditions to assess efficiency.

This methodology enables a structured comparison between fully automated and human-augmented AI workflows, supporting a critical analysis of ChatGPT’s capabilities, limitations, and role in trustworthy AI applications.

What Happened & Findings

Initial Prompts for Automated AI

Results Automated AI

Automated Workflow Assessment (baseline)

The fully automated condition demonstrated that while ChatGPT can rapidly generate coherent and structurally organized outputs, it fails to meet the standards required for trustworthy academic and professional use without human intervention. The case study produced in a single prompt was readable and logically framed but contained significant issues, including flawed or missing citations, unclear attribution of source material, and drift from the original analytical objectives. Similarly, the automated presentation failed to maintain alignment with the input content, producing a visually formatted but contextually irrelevant output. Across both deliverables, the model showed no ability to self-correct, request clarification, or signal uncertainty, resulting in outputs that were fast but unreliable. These results suggest that fully automated use of large language models is insufficient for complex, domain-specific tasks, particularly where accuracy, transparency, and adherence to constraints are critical.

Human in the loop Workflow

Results forAugmented AI Visual Products

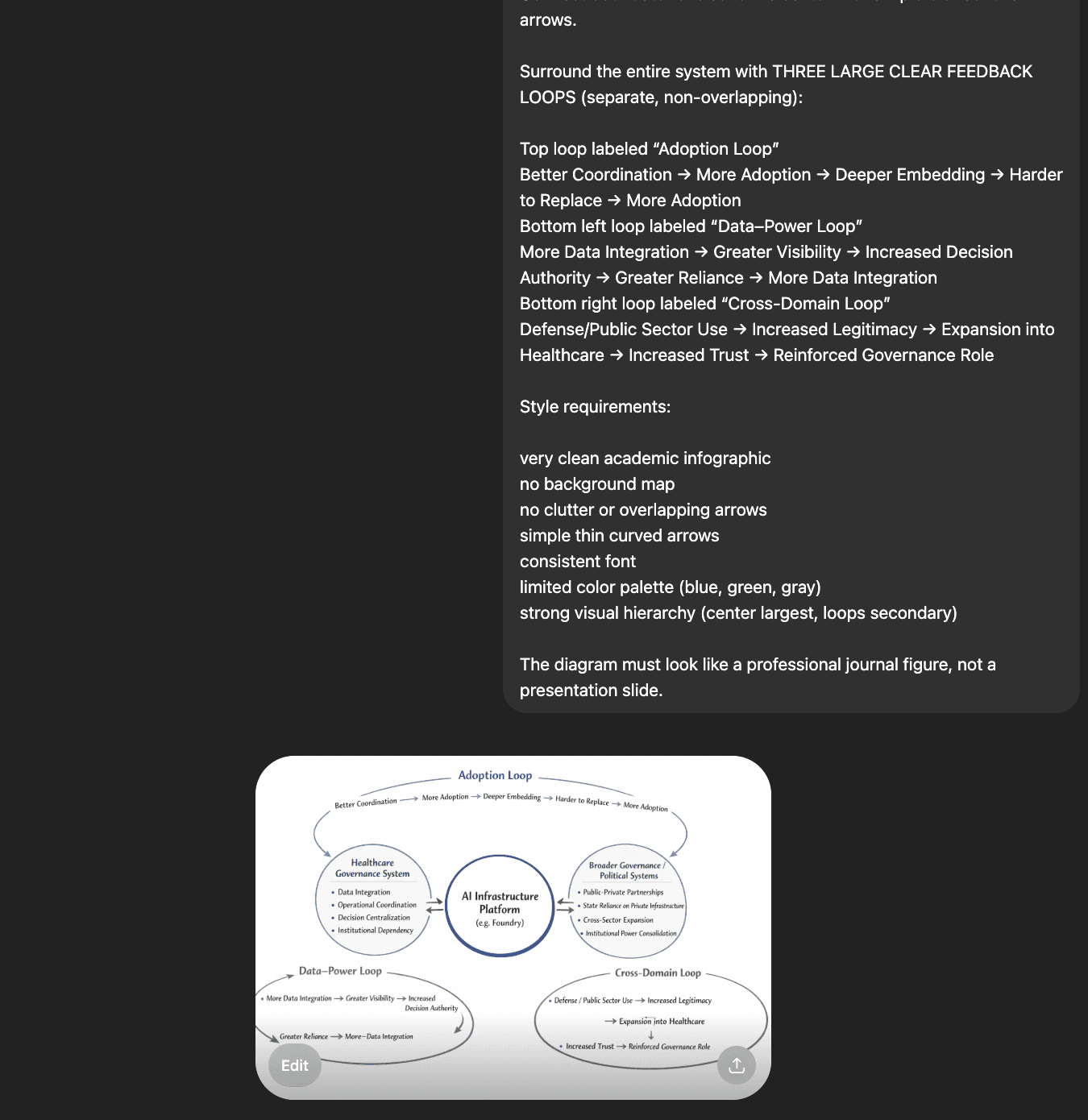

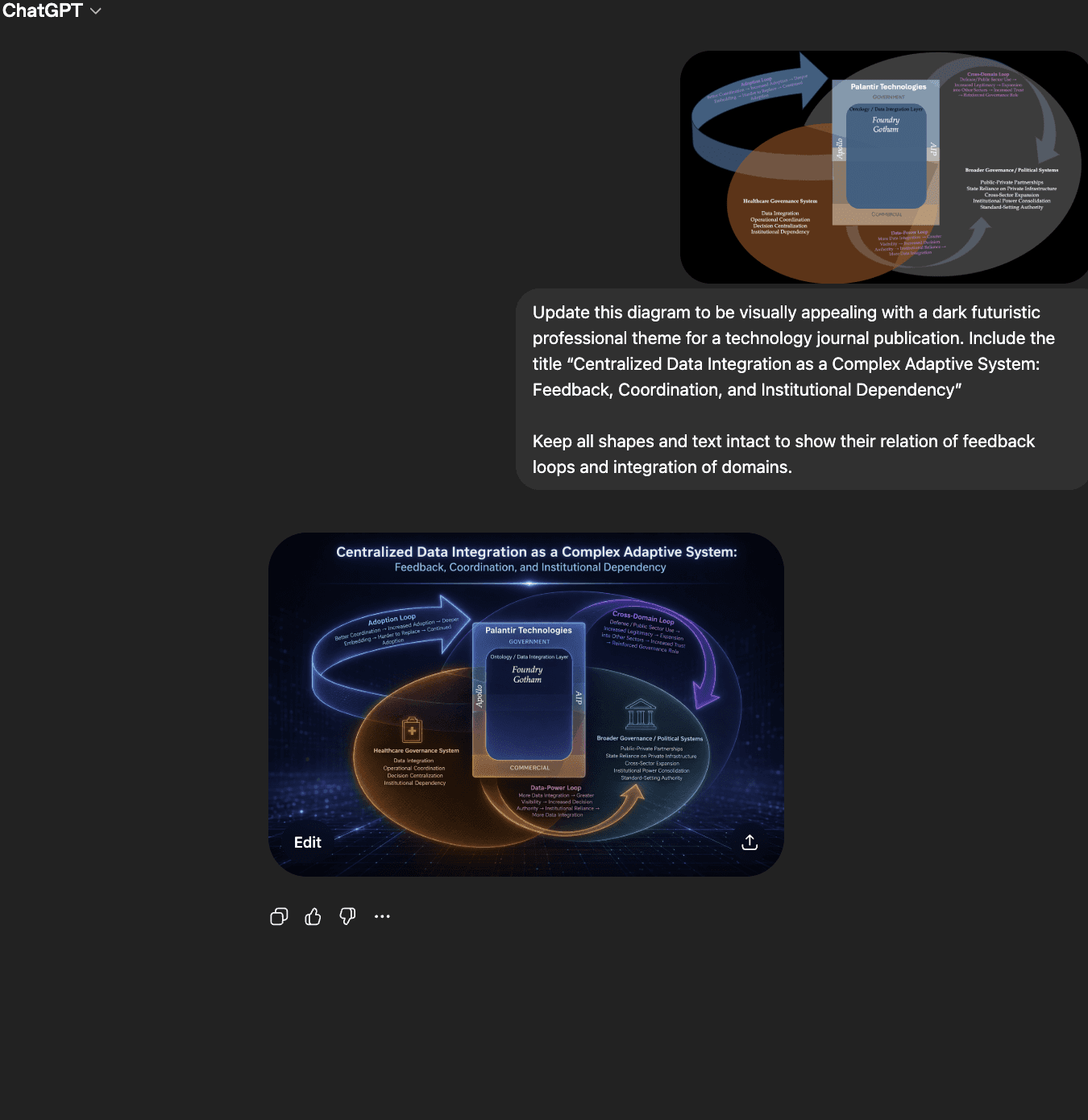

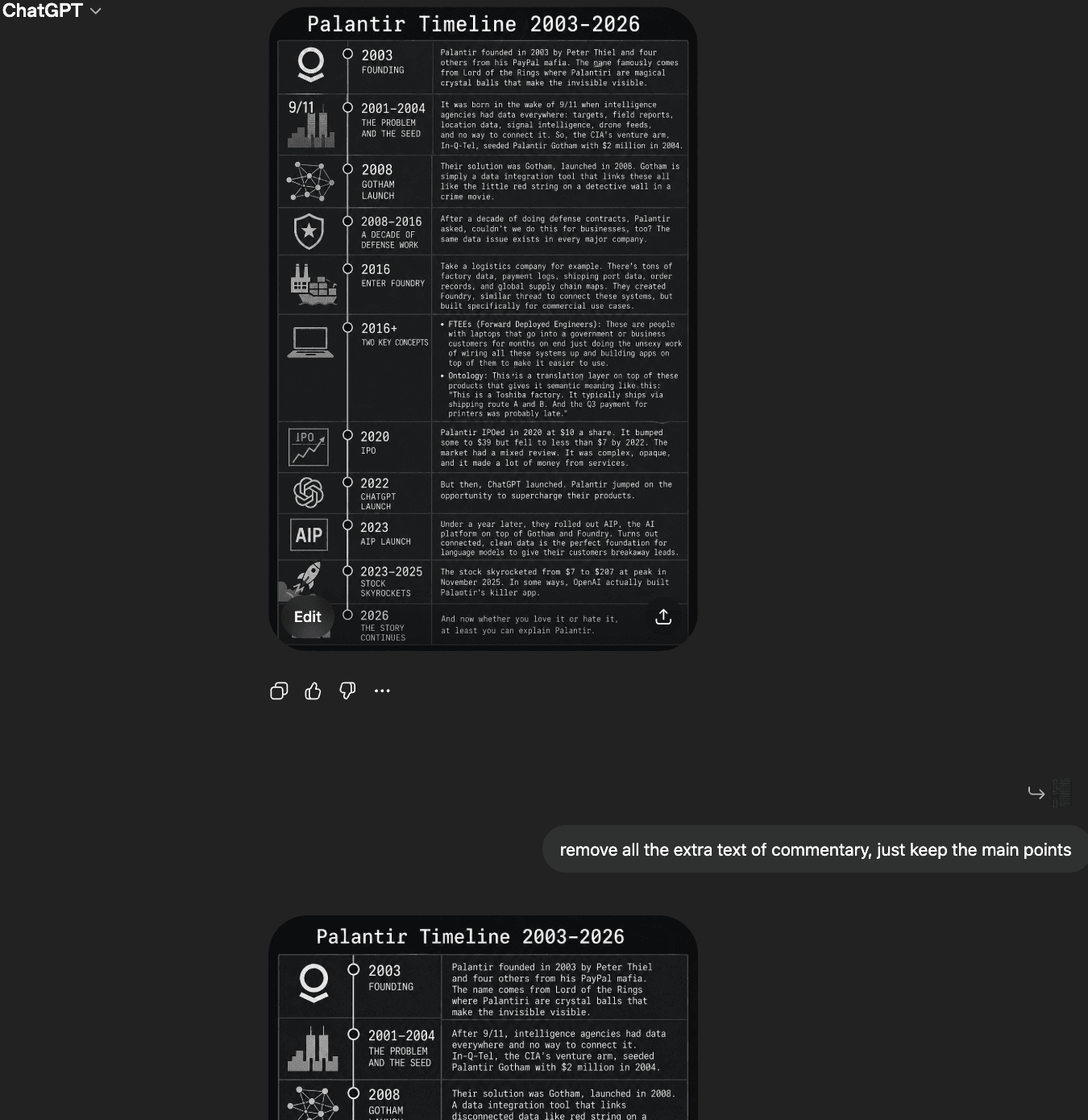

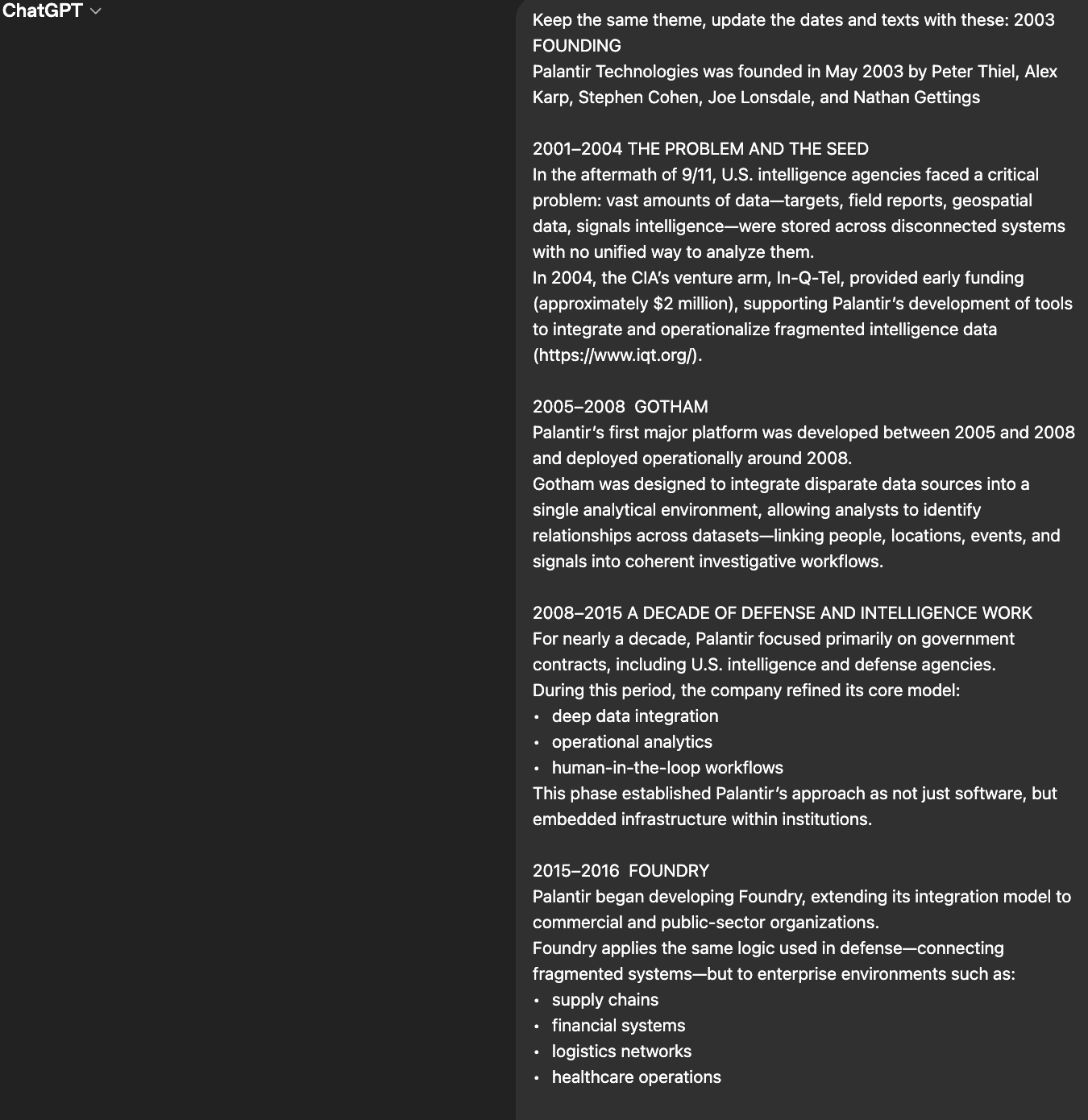

This workflow highlights a key limitation of current ChatGPT image generation: they are far better at styling than structuring. When tasked with producing highly specific visuals (system diagrams, feedback loops, or timelines with precise spatial relationships) the models struggle because they must simultaneously interpret layout logic, semantic relationships, and visual hierarchy. These are deterministic design problems, while image models operate probabilistically.

In the early stages of this process, repeated prompt iterations failed to produce usable outputs. The issues were consistent: broken loops, misplaced arrows, distorted or unreadable text, and inconsistent spatial relationships. Even when prompts were refined, the model continued to “approximate” rather than execute exact structural intent. This led to a high number of rejected outputs and demonstrated that prompt engineering alone cannot reliably enforce strict diagrammatic structure.

I shifted from prompting for generation to augmentation by manually constructing the diagram in PowerPoint. This allowed for defining exact geometry, relationships, and hierarchy. The image generator was then used only to enhance visual style (lighting, color, polish), rather than to determine structure. This significantly reduced iteration time and improved output quality.

The same pattern held in the timeline example. While less structurally complex than the system diagram, it still required multiple iterations (three) to converge on a usable result. This reinforces the broader conclusion: even moderately structured visuals require human-guided scaffolding to achieve precision.

Overall, this process demonstrates that for complex and specific use cases human input is essential for structure and logic, AI is most effective as a refinement tool, and achieving high-quality outputs requires iterative problem solving.

AI-Augmented Writing Reflection

This project became a lesson in humility. My original plan was more expansive than what was ultimately realistic. I began with the intention of producing a broad, visually rich, systems-level research project, but the process forced me to become more restrictive and intentional with my goals. The visual components in particular became highly iterative, messy, and time-consuming. Developing specific diagrams through AI image generation required far more manual problem-solving than I expected and, at times, took attention away from the actual writing and analysis.

I cannot claim that using an LLM clearly reduced the total time required for this project. It helped with organizing sources, formatting, citation placement, and later-stage editing, but those benefits still required manual review, correction, and decision-making. In some parts of the project, especially visual development, AI tools may have increased the amount of time spent because the outputs required repeated iteration and substantial human intervention.

I used ChatGPT Pro throughout the process, but not as a substitute for research, reasoning, or authorship. The scientific reasoning process remained iterative and human-led. Because I care deeply about this subject and am training myself to develop expertise across intersecting domains, the cognitive work could not be offloaded to AI tools. My own reading, synthesis, judgment, and revision remained the central components of the project.

After writing an initial free-flowing draft of approximately 8,000 words, I used ChatGPT Pro to help edit grammar, identify repetition, and reduce my arguments into a more coherent draft of approximately 6,000 words. I then shared that draft with a colleague for recommendation edits. After receiving feedback, I rewrote a second draft and stepped away from the work for several days before returning to it with more distance. For the final edit, I used ChatGPT Pro to help format the work according to publication standards and check whether the argument remained cohesive across a complex topic.

Documenting this process helped me better understand the specific ways I rely on AI language models. More importantly, it showed me how much I have moved away from accepting AI output as automatically useful or authoritative. Instead, I now approach AI as a tool that requires direction, verification, revision, and documentation. This process also clarified the importance of transparently recording how AI tools are used in research and writing, both to maintain ownership of the work and to avoid plagiarism or unclear attribution.